In this video, we discuss why skip connections (also called residual connections) work and why they improve the performance of deep neural networks. We first look at the depth problem in neural nets, then explain the residual block used in the ResNet model, and finally show what improvements it brings.

*Related Videos*

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

Why we build deep neural networks: [ Link ]

Why neural networks can learn any function: [ Link ]

*Contents*

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

00:00 - Intro

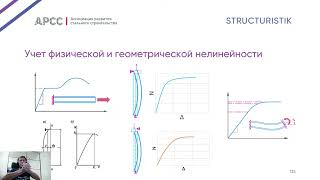

00:11 - Depth Problem

00:46 - Residual Block

01:28 - Residual Connections in DNNs

04:20 - Loss Space

04:48 - Outro

*Follow Me*

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

🐦 Twitter: @datamlistic [ Link ]

📸 Instagram: @datamlistic [ Link ]

📱 TikTok: @datamlistic [ Link ]

*Channel Support*

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

The best way to support the channel is to share the content. ;)

If you'd also like to support the channel financially, donating the price of a coffee is always warmly welcome! (completely optional and voluntary)

► Patreon: [ Link ]

► Bitcoin (BTC): 3C6Pkzyb5CjAUYrJxmpCaaNPVRgRVxxyTq

► Ethereum (ETH): 0x9Ac4eB94386C3e02b96599C05B7a8C71773c9281

► Cardano (ADA): addr1v95rfxlslfzkvd8sr3exkh7st4qmgj4ywf5zcaxgqgdyunsj5juw5

► Tether (USDT): 0xeC261d9b2EE4B6997a6a424067af165BAA4afE1a

#skipconnections #resnet #residualconnection