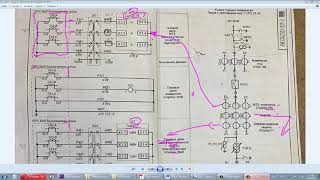

NVIDIA has published a code set that lets just about anyone run a private ChatGPT on their own data. While you can run this on a laptop, we wanted to get the models a little closer to where data resides in many small-business settings: the NAS. So we cracked open a QNAP NVMe NAS and dropped in an A4000 to do the dirty work.

There's a bit more to the story, though. Chat with RTX runs on Windows, so we used QNAP's Virtualization Station to create a Windows VM, then installed the packages. This video covers all of the details, and the full guide on how we did this is linked below.

QNAP has subsequently added the A4000 to the compatibility list.

Full guide on the QNAP Chat with RTX configuration:

[ Link ]

#qnap #nas #ChatWithRTX #ai

AI Greatness? We Slammed a GPU in a QNAP!

Tags

QNAP TS-h1290FX, NAS, AI integration, AMD EPYC 7302P, NVMe storage, GPU support, Solidigm P5336 SSD, 737TB capacity, data privacy, security, Virtualization Station, NVIDIA RTX A4000, GPU passthrough, Virtual Machine (VM), RAID5, Chat with RTX, retrieval-augmented generation (RAG), TensorRT, RTX acceleration, local large language model (LLM), Windows VM, AI workflows, SMB, SME, data reduction, cost-effective AI solutions, qnap, qnap nas, qnap ai
